The Monetisation Gap of Artificial Intelligence

Photo by Tobias Reich on Unsplash

Article Authors: Mihail Gaydarov, Spas Arsov.

History of Artificial Intelligence

Artificial Intelligence is a term that dates back to 1956, when it was first introduced at the Dartmouth Conference. Although the idea of a generative algorithm was still rather primitive at the time, researchers believed that machines had the potential to mimic human intelligence. The early stages of artificial intelligence, in the 1950s and 1960s, focused on creating algorithms that reproduced human reasoning through a strict set of rules. In other words, these comparatively simple algorithms were designed to imitate human cognition. Data suggest that 1956 brought projections of rapid growth for this new technology; afterwards, however, funding was cut by almost 50% in the U.S. and UK, leading to decades of stagnant growth for AI.

The next major phase in the development of what we now know as artificial intelligence came in the 1970s and 1980s. Experts refer to this period as “the AI winters”: artificial intelligence stagnated as a consequence of poor commercial returns on expert systems. By the 1980s, around $1 billion in annual investment had failed to deliver on the promises made by the field’s pioneers. It is important to note that limited computational power was one of the main reasons for these early failures: machines were simply not powerful enough to sustain AI projects and their development.

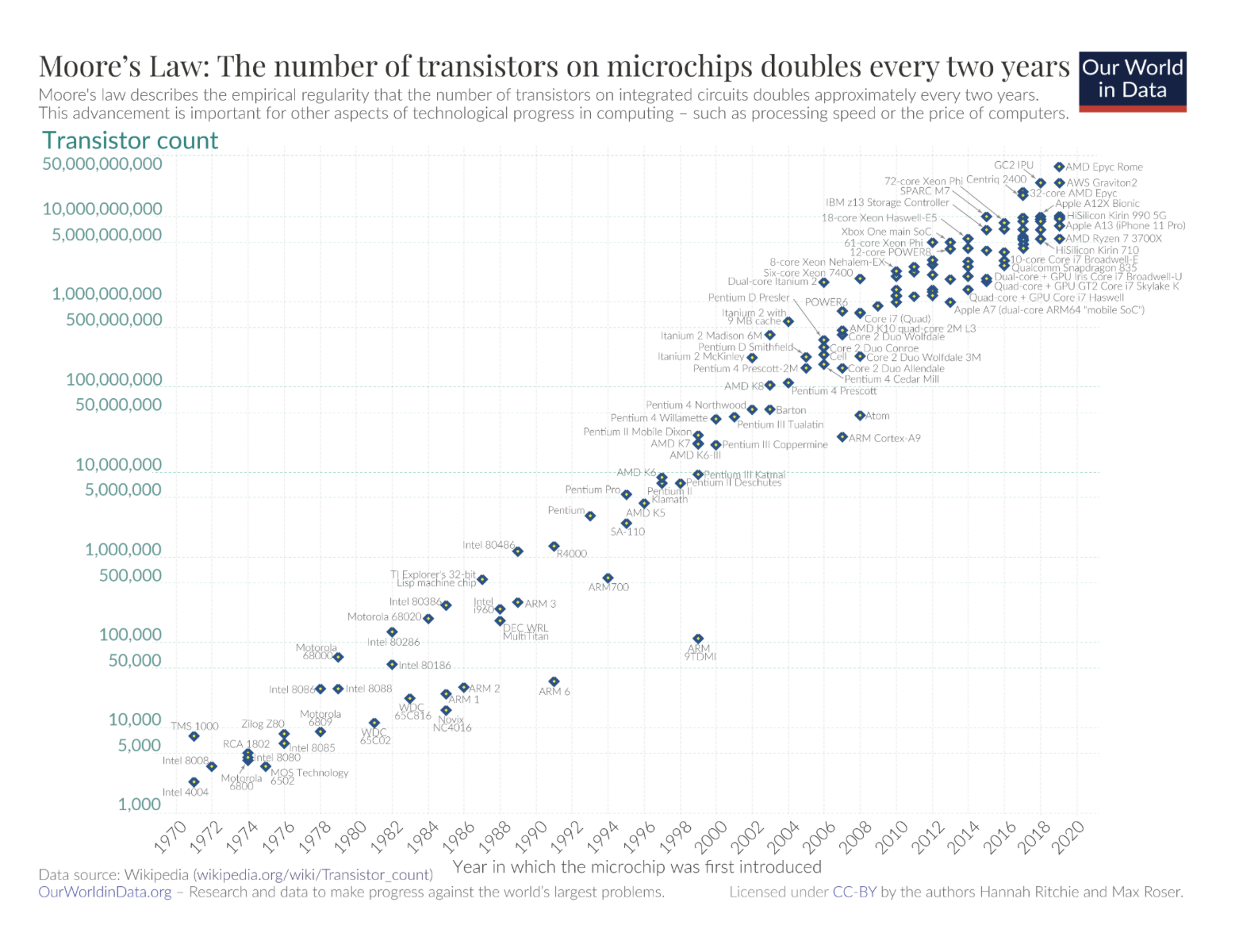

During the 1990s this was completely overturned by the introduction of more powerful computer hardware. AI shifted decisively towards machine learning, in which systems improve by learning from data rather than by following hand-coded rules. These data-driven models were also far more sophisticated and promising than the previous hard-coded algorithms, which relied on fixed rules to produce output. Moore’s Law supports the claim that computing power increased during the 1990s. This observation, formulated by Intel co-founder Gordon Moore, states that the number of transistors on a chip doubles approximately every two years, while the cost of computing falls accordingly. Figures show that global computing capacity roughly doubled every 18-24 months during the 1990s, a significant step towards making modern AI possible.

Another key milestone for AI was the 1997 match between Garry Kasparov, one of the most legendary chess champions in the world, and IBM’s chess computer, Deep Blue. Deep Blue defeated Kasparov, demonstrating the growing real-world capabilities of artificial intelligence. During play, IBM’s system analysed around 200 million positions per second, a clear brute-force advantage over any human chess player.

The 2000s and 2010s marked the most significant period of development for the foundations of generative AI, driven mostly by the arrival of big data and even more computational power. These enabled the development of neural networks and early deep learning architectures. Deep learning brought major breakthroughs in areas such as image recognition, natural language processing and autonomous systems. The compute used to train AI models rose roughly 300,000-fold over the same period, and data creation grew from an estimated 2 zettabytes in 2010 to 120 zettabytes in 2023. Without a doubt, this is the most important period for the development of modern artificial intelligence: the architecture behind AI took a clearer direction, and market investors began to show notable interest.

Economic Implications of AI Development

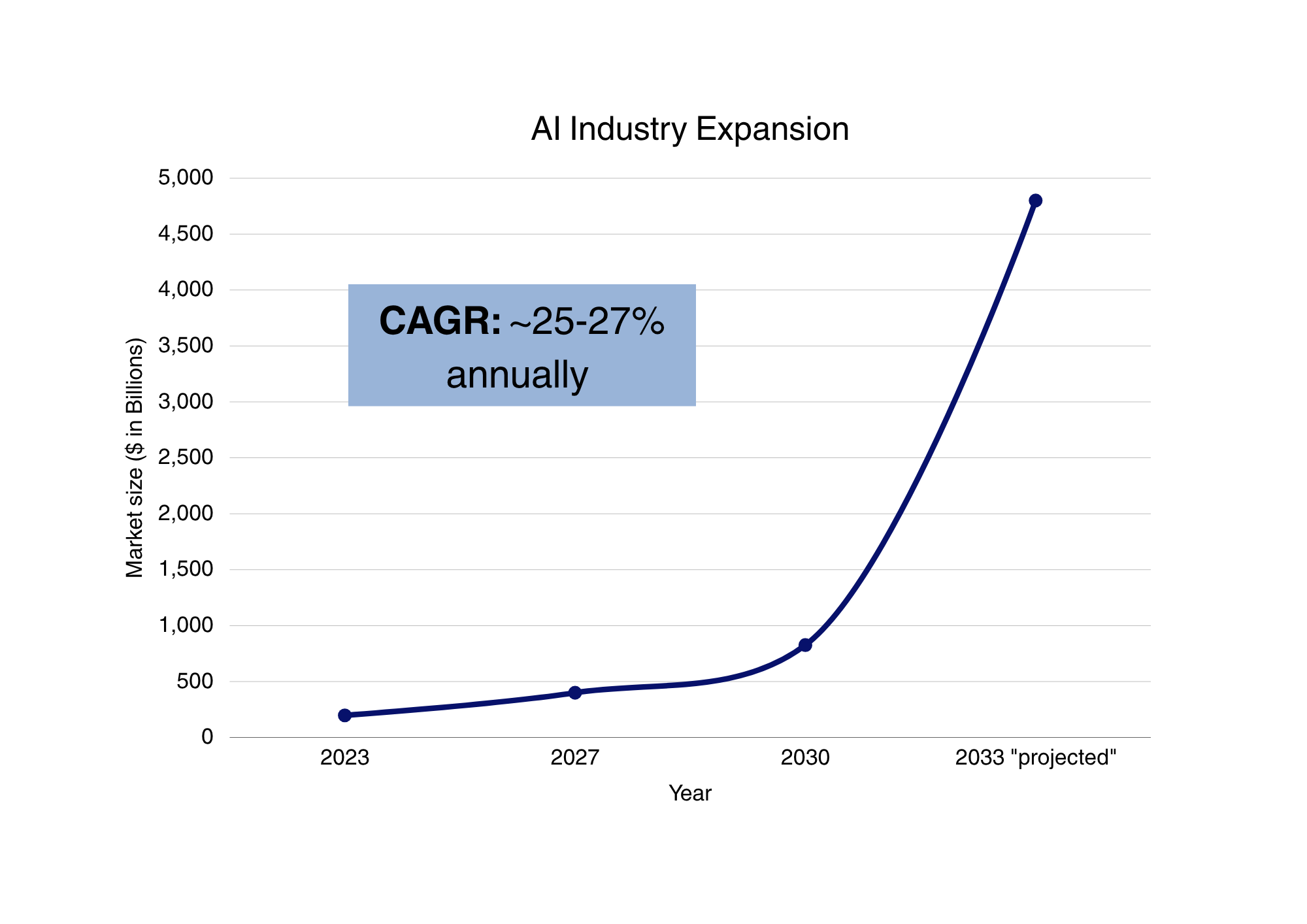

There is no doubt that artificial intelligence has fundamentally altered how the world perceives careers and overall economic development. Sources such as the European Parliamentary Research Service (EPRS) quote a 14% increase in global GDP by 2030 as a result of AI adoption. Perhaps the most crucial area AI has affected is productivity and efficiency within companies. Generative algorithms have been shown to improve aspects of a company’s operations such as overall efficiency, human error margins and speed/accuracy. The push towards integrating AI into jobs has led to a major decrease in production costs and higher output per worker. This efficiency boost is associated with an increase of about $15.7 trillion in the global economy within about a decade. Worker productivity, although dependent on the sector and the enterprise’s general activity, shows a reported mean increase of about 40%.

Another perspective treats AI as a general-purpose growth engine or, more specifically, a general-purpose technology. Its widespread integration leads to higher production efficiency through automation and optimisation. Enhanced decision-making through large-scale data analysis also helps enterprises analyse data more objectively. It is therefore reasonable to argue that the short-run economic impacts of artificial intelligence are mostly positive.

Source: TFR Design.

On the other hand, we also have to consider the negative implications of AI. The strongest argument here concerns the replacement of jobs by artificial intelligence. Although a complete replacement of human labour on most fronts is unlikely, there is a significant trend towards automating low-level jobs. Research from the EU states that about 14% of jobs are entirely automatable. As of Q3 2025, the EU had a labour force of roughly 198 million employed individuals and over 12 million unemployed. Applying the 14% share to those in employment (not counting the unemployed) gives about 27.7 million potentially automatable jobs. As large as this number appears, even more pessimistic data supports the claim: a further 32% of jobs in the EU are expected to be significantly transformed by AI. Together, nearly half of all jobs in the EU (14% + 32% = 46%) will be affected by artificial intelligence at some point, either through full automation or notable change. These figures come from a study conducted in 2019, and we believe current numbers are likely considerably higher: with the further development of generative and integrated AI, these percentages will most likely rise even further.
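The job-exposure arithmetic above can be reproduced in a few lines. This is a minimal sketch that simply applies the 2019 study’s shares (14% fully automatable, 32% significantly changed) to the Q3 2025 EU employment figure quoted in the text; treating those 2019 shares as still valid in 2025 is an assumption, and the variable names are illustrative only.

```python
# Figures as quoted in the text. Assumption: the 2019 shares still apply to 2025 employment.
EMPLOYED_MILLIONS = 198.0            # EU employed individuals, Q3 2025 (unemployed excluded)
SHARE_FULLY_AUTOMATABLE = 0.14       # jobs judged entirely automatable (2019 study)
SHARE_SIGNIFICANTLY_CHANGED = 0.32   # jobs expected to be significantly transformed

automatable_millions = EMPLOYED_MILLIONS * SHARE_FULLY_AUTOMATABLE
changed_millions = EMPLOYED_MILLIONS * SHARE_SIGNIFICANTLY_CHANGED
affected_share = SHARE_FULLY_AUTOMATABLE + SHARE_SIGNIFICANTLY_CHANGED

print(f"Fully automatable:     {automatable_millions:.1f} million jobs")
print(f"Significantly changed: {changed_millions:.1f} million jobs")
print(f"Affected overall:      {affected_share:.0%} "
      f"({automatable_millions + changed_millions:.1f} million jobs)")
```

The 46% total is where the “nearly half” figure comes from. The 63.4 million figure for significantly changed jobs is derived here under the same assumption, not quoted from the study.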

More recent sources, such as a 2026 BIS Bulletin on the economic impacts of AI, quote improvements in worker productivity ranging from about +10% to as high as +65%. Economy-wide total productivity is projected to increase by between +0.07% and +0.9% per year. Crucially, the data quoted in this study are highly skewed and meaningful only on a sector-by-sector basis.

A further consequence of AI adoption is wage polarisation. As AI is integrated into corporate environments, far fewer workers are required to perform a given task, resulting in higher pay for high-skilled workers and lower pay for low-skilled workers. As a result, the capital share of income is expected to rise relative to labour, alongside a shift towards specialised human capital.

Artificial intelligence presents clear productivity and growth potential, with strong firm-level gains and optimistic projections for global GDP. However, these effects do not fully translate at the macro level, where productivity improvements remain modest and uneven. At the same time, AI is reshaping labour markets rather than replacing them entirely, contributing to job transformation and wage polarization. Overall, AI should be viewed as a gradual and uneven economic shift rather than an immediate, universally transformative force.

Taken together, these findings provide the foundation upon which the central thesis of this research is developed:

Artificial intelligence represents a significant technological innovation with clear potential to enhance productivity across industries. However, the rapid surge in demand, coupled with the proliferation of AI-focused firms, has introduced substantial concerns regarding the credibility and sustainability of prevailing business models. This saturation of the market, often characterised by speculative positioning and unclear value propositions, undermines trust in the sector and raises questions about whether current valuations and practices reflect genuine long-term utility or short-term opportunism.

The AI Revolution (Post-2022)

From AI text generation to automated milking robots on dairy farms, the industry has come a long way. After the COVID-19 pandemic, and particularly with the release of GPT-4 on March 14, 2023, the world saw a dramatic shift from completing mundane tasks manually to automation in the hands of ‘normal’ people. This is not to say that the world was unfamiliar with the terms “machine learning” or “automation”, but people were used to doing everything themselves, so repetitive tasks and research consumed significant time. The introduction of LLMs (large language models) changed this substantially, enabling more efficient time management.

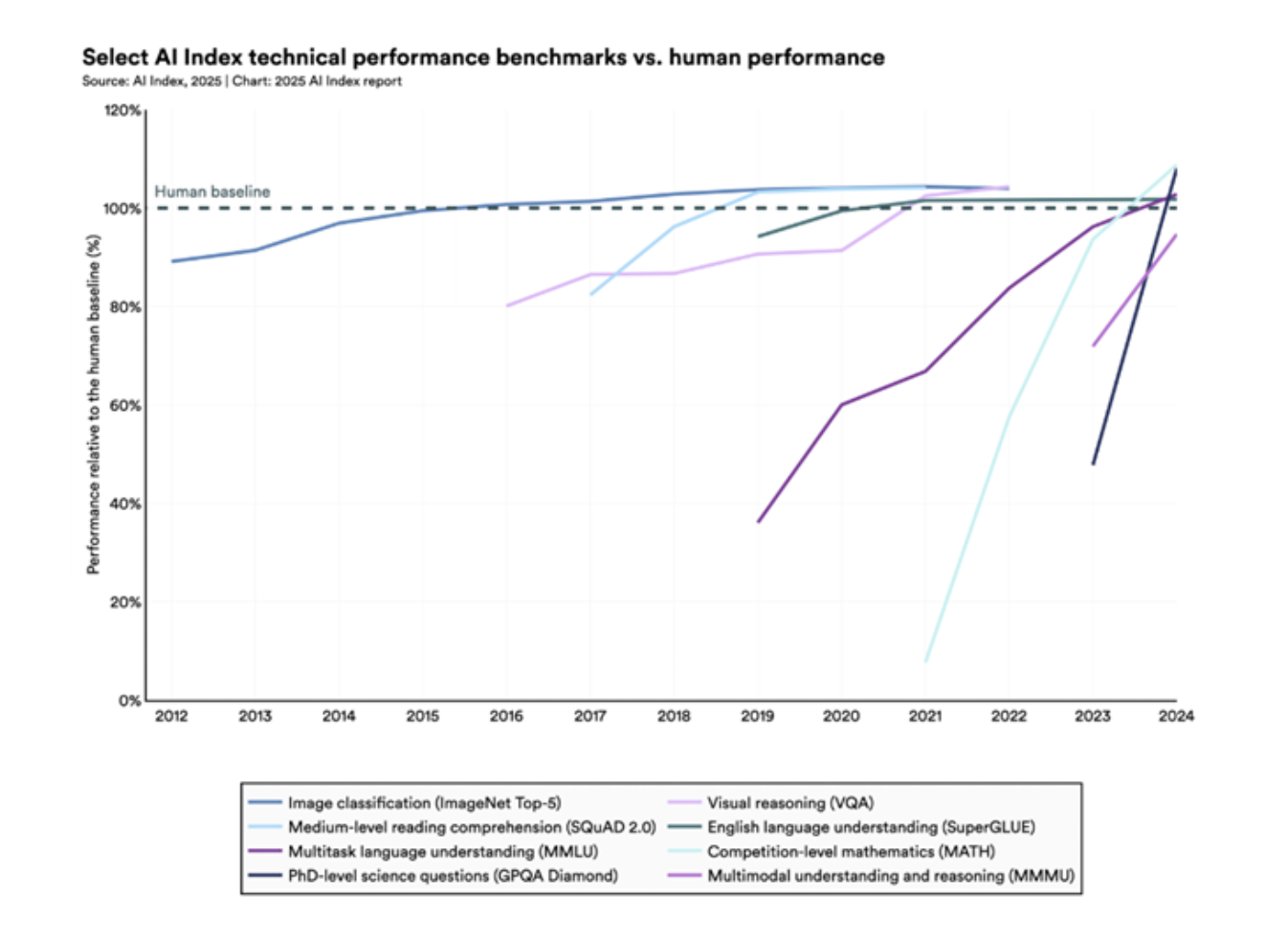

AI has never been perfect, but in 2023 researchers introduced new benchmarks to stress-test LLMs and measure how advanced they really were, such as MMMU (Massive Multi-discipline Multimodal Understanding), GPQA (Graduate-Level Google-Proof Q&A Benchmark) and SWE-bench (a software engineering benchmark). Within a year of their introduction, scores on these benchmarks had risen by between roughly 19 and 67 percentage points. Artificial intelligence now outperforms humans in certain tasks, for example solving programming tasks under limited time budgets, and excels in certain disciplines such as high-level mathematics. Despite this, AI continues to struggle with complex reasoning benchmarks such as PlanBench and often fails to solve logic tasks reliably, even when provably correct solutions exist.

Business use of AI has been accelerating rapidly, rising by 23 percentage points in 2024, from 55% to 78% of organisations (a relative increase of about 42%), with further increases expected in the 2026 AI Index Report due on 13 April 2026. What began as a research-first approach with specialist-only use cases exploded in popularity with an extreme wave of ‘Mass Customization’. Before 2023, the AI revolution was largely confined to research, deep learning and, since the mid-2010s, image recognition, but generative artificial intelligence has changed the narrative both literally and metaphorically. AI-driven R&D and the integration of AI into company workflows have now become a key driver of revenue growth. Longitudinal data show that companies investing in AI experience approximately 2% additional annual sales growth and 2% higher annual growth in employee count than companies not investing in it.

Furthermore, new opportunities have emerged for companies and individuals to implement AI capabilities even in sectors not traditionally considered easy to automate. In the DACH region (Germany, Austria, Switzerland), labour shortages and an expensive workforce have produced some extreme cases. For instance, on one Austrian farm the owner alone looks after 170 cows by fully automating food preparation, delivery to the livestock, feeding, milking and hygiene with robots. Each robot requires an initial investment of around €100,000 but pays off quickly given the acute situation in Western European agriculture. A similar example comes from Germany, where an engineer working on his family’s farm has developed an optical weed-control solution: weeds are burned by satellite-guided, AI-driven laser beams mounted on the tractor. The system uses multi-layer image recognition to differentiate precisely between weeds of all shapes and sizes and the crops, which need to grow undisturbed.

Despite this optimism, an unhealthy trend is emerging: expectations are becoming extremely high, and the technology cannot keep pace with the investment.

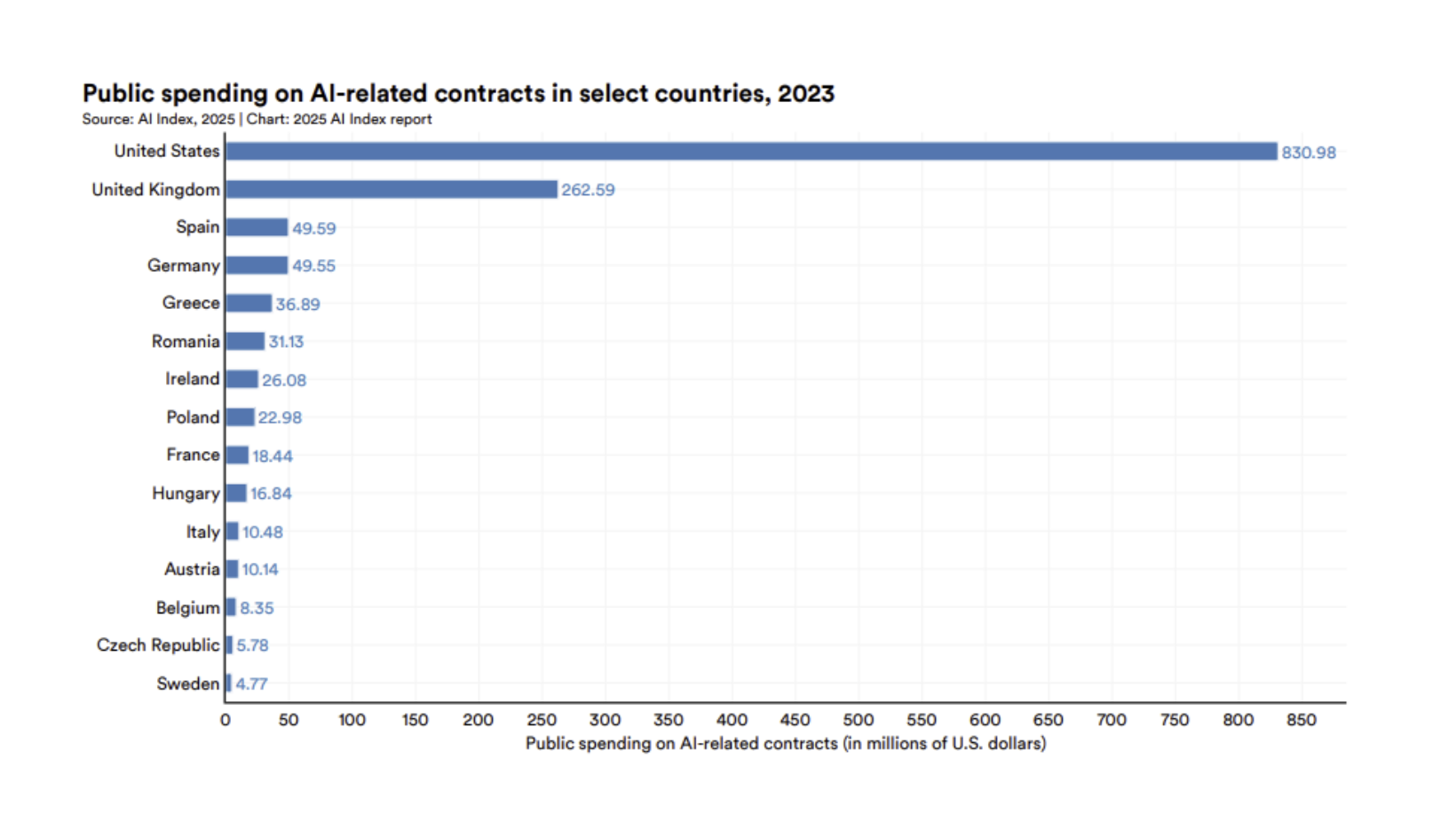

The Industry Expectations Against the Economic Reality

A new economic model is developing with AI at its centre. In 2024, U.S. private AI investment reached $109.1 billion. National governments are investing at massive scales, highlighted by France's €109 billion commitment, Saudi Arabia's $100 billion initiative, and China's $47.5 billion semiconductor fund. AI investments directly drove product innovation, resulting in a 13% increase in trademarks and a 24% rise in product patents. AI hardware costs have declined by 30% annually, and energy efficiency has improved by 40% each year. Model development is accelerating dramatically, with training compute doubling every five months and training datasets doubling every eight months.

All of this is before we take into account the diminishing returns of exponential resource growth. Even in 2024, despite massive increases in training resources, the performance gap between the top-ranked and the 10th-ranked model shrank from 11.9% to 5.4% in a single year. The 2025 landscape shows how marginal the leads between the world’s top models have become, making the AI race even more relentless and constrained by the physical limits of efficiency. Looking beyond Earth, companies and individuals such as Google, Elon Musk and Jeff Bezos are actively pursuing space-based data centres, with R&D flowing in that direction. Meanwhile, the recent Pentagon scandals, notably the still-ongoing Anthropic-Pentagon saga, showed the extent to which AI companies are willing to exploit a scandalous situation and set morals aside for a purely capitalist approach. They also showed how easily AI companies can lose the faith of their customers, as mass migration from one LLM to another has become easier than ever.

This raises the question of whether the money is well spent. At the end of the day, circularity can be identified among companies such as Nvidia, OpenAI and Oracle, with capital and purchase commitments flowing back and forth between the same firms. Structural dependencies on companies such as ASML and TSMC are also evident, as markets become increasingly volatile every time microchip supply chains are at risk; this is also why the U.S. administration never fully implemented tariffs in that sector, owing to the powerful lobbying of the tech giants. Considering this volatility, the diminishing returns on rising R&D and data-centre development costs, and supply chains at risk in an increasingly polarised world, it becomes clear why the foundations of the sector are often questioned.

Unsustainability in the Business Model of AI Companies

Building on the diminishing returns mentioned earlier, the scale and resources required to train competitive AI models are growing exponentially, with training compute doubling every five months, datasets doubling every eight months and power consumption doubling annually. This has created a structural issue: models are increasingly trained on synthetic data as we run out of real data to use as a resource. The trend also underlines the need for better AI infrastructure to handle the growing capacity requirements of users and organisations. Despite all these efforts, commercial problems arise, as businesses struggle to implement the new workflows that artificial intelligence makes possible.
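Doubling times are easier to compare once they are converted into growth factors. The sketch below assumes clean exponential growth, which is a simplification, and uses the three doubling times quoted above; the function name `annual_growth_factor` is hypothetical.

```python
def annual_growth_factor(months_to_double: float) -> float:
    """Growth over 12 months for a quantity that doubles every `months_to_double` months."""
    return 2 ** (12 / months_to_double)


# Doubling times quoted in the text, in months (assumed constant over time).
DOUBLING_MONTHS = {
    "training compute": 5,
    "training datasets": 8,
    "power consumption": 12,
}

for name, months in DOUBLING_MONTHS.items():
    yearly = annual_growth_factor(months)
    five_years = yearly ** 5  # compound the yearly factor over five years
    print(f"{name:<18} x{yearly:.2f} per year, x{five_years:,.0f} over five years")
```

Under these assumptions, training compute grows about 5.3x a year (roughly 4,096x over five years), datasets about 2.8x a year (about 181x) and power consumption 2x a year (32x), which is the scale behind the infrastructure concerns raised above.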

In 2026, 47% of retailers consider AI core to their business, yet many suffer from “proof-of-concept fatigue”: growing negative sentiment within organisations caused by endlessly testing AI solutions without moving them into production, implementing them or realising tangible ROI. The rate of such stalled initiatives currently sits at around 46%. Most corporate AI projects stall in the pilot phase and require a high initial investment without a clear implementation strategy. Some companies that carried out mass layoffs, driven by the desire to replace salaries with AI, were later forced to rehire and incurred extra HR costs because the results were insufficient and inefficient. Only around 5% of executives currently report seeing widespread value from their AI initiatives.

This does not stop AI companies from seeking to expand even more rapidly, despite waning sentiment and lower ROI for businesses. Global investment in AI infrastructure is projected to exceed $7 trillion over the next decade, highlighting the need for critical infrastructure such as data centres, cooling, power grids, telecommunication networks, optical cables and much more. The business models of these market leaders should always be examined critically, as some companies, such as OpenAI, burn tremendous amounts of money despite beating revenue expectations: around $13 billion in revenue for 2025, with cash burn held to roughly $9 billion. Overconfidence could prove economically painful given the company’s $1.4 trillion of infrastructure commitments, or 30 gigawatts of computing capacity, enough to power 25 million U.S. homes. With 2026 a crucial year for OpenAI due to funding difficulties, its plans for an IPO at a valuation of around $1 trillion could prove make-or-break for the AI giant in the global AI race as competitors close in fast.
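To put the OpenAI figures in proportion, a back-of-the-envelope calculation can relate the quoted commitments to 2025 revenue and convert the 30 gigawatts into an average draw per home. It uses only the numbers in the paragraph above and ignores timing, financing and cost structure, so it is an illustration rather than a valuation; the constant names are illustrative.

```python
# Figures as quoted in the text (USD and watts).
REVENUE_2025_USD = 13e9      # approximate 2025 revenue
COMMITMENTS_USD = 1.4e12     # infrastructure commitments
COMPUTE_POWER_W = 30e9       # 30 gigawatts of computing capacity
HOMES_POWERED = 25e6         # U.S. homes that 30 GW could supply

commitments_to_revenue = COMMITMENTS_USD / REVENUE_2025_USD
avg_kw_per_home = COMPUTE_POWER_W / HOMES_POWERED / 1_000  # watts -> kilowatts

print(f"Commitments are about {commitments_to_revenue:.0f}x 2025 revenue")
print(f"Implied average draw per home: {avg_kw_per_home:.1f} kW")
```

At a constant 2025 revenue level, the commitments would equal roughly 108 years of revenue; revenue growth is ignored here, so this overstates the burden if revenue keeps rising. The 1.2 kW per home is an average continuous draw implied by the quoted comparison.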

Competition in the AI Market

It is undeniable that “the Big 4 of AI” in the U.S., i.e. OpenAI, Alphabet, Anthropic and xAI, are in constant competition for the AI throne. The ‘Pentagon drama’ revealed how intolerant a user base can be when one AI giant seeks to profit from the ethical boundaries of another. Even so, Alphabet’s business model is decisively the most self-sustaining: it produces its own TPUs and powers its own data centres, backed by strong, proven revenue. Its business is diversified rather than based purely on AI-token or AI-subscription revenues, the dependence that limits financing options for private companies such as OpenAI and Anthropic against a backdrop of bold expectations and infrastructure commitments. As recently as March, Gemini introduced a feature, similar to one already available in Claude, that lets users easily ‘move out’ of ChatGPT and Claude and bring their data directly into Gemini, making it simple to migrate to the competition.

Even though the United States is home to several of the global AI giants, not all significant models originate there. The stock market crashes of January and, subsequently, February 2025 followed the unveiling of a state-of-the-art model by the Chinese firm DeepSeek, which offered direct competition to LLMs such as ChatGPT and forced the world to rethink OpenAI’s position as the one and only ‘AI king’. Beyond Asia, Europe has also been developing critical AI infrastructure through its “AI Factories”, sometimes in cooperation with American tech giants such as Alphabet. The French company Mistral AI has become the undisputed market leader in the EU, despite pursuing different goals from the ‘1+4’ Sino-American competition: it emphasises cost-effective API usage, rapid responses, flexibility through open-weight models and data privacy based on the EU’s AI Act, the first comprehensive legal framework for AI.

It could therefore be said that, despite market fragmentation, the competing LLMs offer distinct specialisations and use cases, partly satisfying investors’ expectations in the short term.

The Inflating AI Bubble

The “DeepSeek Crack” (January 2025) - External Pressure

On January 20th, 2025, a Chinese firm called DeepSeek released R1, a new generative model comparable to established American ones such as ChatGPT and Gemini. A week later, on January 27th, the American stock market suffered some of its most significant losses in years, with large companies like Nvidia losing $593 billion of market capitalisation in a single day. This slump remains the largest single-day loss of market value in the GPU manufacturer’s history. The damage was felt most in the tech and AI sectors: the Nasdaq Composite index lost 3.1%, and the Philadelphia Semiconductor Index fell 9.2% (its largest drop since 2020). As to why this happened, and why the event matters for the inflation of the so-called “AI bubble”, we consider it to mark the first cracks in the traditional corporate model for artificial intelligence development. At its core, DeepSeek’s model was a standard generative model like those from the U.S.; the key difference was its low cost. While the model delivered high performance and accuracy, it cost significantly less to develop than its American counterparts. DeepSeek’s introduction challenged the “Capital Moat” thesis in ways previously unseen in the industry. Put simply, the “Capital Moat” thesis rests on the idea that only firms with massive compute budgets can compete. As it turned out, these perceived barriers to entry into the AI industry were broken in a single day.

The event was so important for AI speculation because it was the first time the prevailing AI narrative was challenged and its assumptions broken. Until then, progress in AI accuracy and scale had been equated with exponentially rising computing costs. The model’s efficiency and speed showed the industry that even an early, first-generation model can succeed through efficiency rather than scale, and that AI could be commoditised faster than expected. This new model of efficiency over scale was not the only reason markets reacted the way they did, but the principle is simple: if AI models become cheaper to train and run, the demand for high-end GPUs becomes less certain. If efficiency could substitute for the constant development of newer and better training hardware, this signalled to investors that the future of AI hardware might not be so bright. It also created uncertainty about the return on investment (ROI) of data-centre investments. As a result, valuations in related sectors such as chipmakers, cloud providers and AI infrastructure companies compressed. In terms of the rising “AI bubble”, we interpret the event as a shock caused by an alternative model rather than a bubble bursting: the industry’s model was being repriced in real time.

The “Valuation Uncertainty Phase” (Feb-April 2025) - Internal Market Correction

Following the DeepSeek repricing event, a phase of valuation uncertainty began among investors. Although this phase was far less dramatic and received less media attention, it reflected a structurally much deeper issue.

As already established, the trigger for this period of uncertainty was the introduction of DeepSeek’s efficient, accurate and much cheaper model. Investors began to question whether infrastructure had been overbuilt, whether these investments generated actual cash flows, and how long it would take for artificial intelligence to pay back the capex. Data from this period show that large corporations’ AI expenditures were at record highs. Microsoft alone had AI capex of roughly $80 billion in 2025, Meta Platforms was estimated at $60-65 billion, and Alphabet at about $75 billion. Essentially, capital expenditure grew much faster than the proven returns on these enterprises’ investments.

It is also very important to note that DeepSeek’s introduction was the catalyst for this phase rather than the whole picture. Signs of hesitation had begun to appear before DeepSeek was unveiled: AI pioneers like Microsoft pulled back from about 2 GW of data-centre projects, while service companies like Amazon paused some of their data-centre leasing discussions. Overall, this was not a major collapse in demand; it is better described as uncertainty about the timing and functioning of the pre-established model.

The market then reacted rather abruptly. Alphabet’s stock fell by around 8-9% after its earnings report, and AI-exposed equities became highly volatile, especially as trading began to hinge on earnings credibility rather than on the narrative of future AI value. The main structural driver of this market correction is the significant monetisation gap. Even though there has been a strong push in recent years to build AI into various classes of goods and services, very few firms in the industry generate stable profits. McKinsey & Company found in its 2024 report that a mere 1% of companies could be classified as “AI mature”: in 2024, 70-80% of companies used artificial intelligence in at least one of their functions, yet just 1% had AI embedded in their operations in a way that generated real economic value (AI maturity).

Infrastructure overhang is another structural driver that poses a significant risk to the market. Huge investments in areas such as high-performance specialised AI GPUs, data centres and energy infrastructure came under scrutiny over whether they would actually be needed, and this potentially underutilised capacity fuelled speculation about failing returns.

A final factor in this phase was the pricing pressure created by DeepSeek’s introduction. Other AI models entering the price competition pose a significant risk to under-optimised large-scale corporations that rely on raw computing power. For example, the Chinese model’s cuts to development and training costs of up to 99% put significant pressure on the traditional model. As a result, margins compressed materially, weakening investors’ long-term value expectations.

The Expanding Frontier of Artificial Intelligence Capabilities

Diminishing returns in the further development of AI reliability, consistency and accuracy will remain a fact, but the expansion of the implementation frontier is still up for grabs. People now have powerful instruments at their disposal; what they need to do is learn how to use them. An interesting use case can be found in one of the most influential industries in our society, private equity, and to a similar extent in banks’ alternative investments, such as modern infrastructure.

In 2026, the era of cheap debt and revenue-multiple expansion is largely over, owing to inflationary pressures, global uncertainty and supply-chain risks arising from wars and isolationism, which have forced investors to rethink how value is created. Value creation is now driven by operational alpha: directly improving the target company’s P&L through technical and strategic intervention. Today this is achieved through a mix of two main strategies. The first is AI integration to improve operational efficiency and lower costs; the second is the “Buy-and-Build” strategy of acquiring smaller add-on companies to clear the competitive landscape and consolidate the acquirer’s position as the dominant market leader. This combined strategy is called “Intelligence-Led Consolidation”, and it creates a synergistic effect in which the total value is significantly greater than the sum of the parts, because AI-driven operational integration adds value on top of the conventional M&A synergies. This multiplier effect hedges against the risk of diseconomies of scale (integration friction, fragmented data and administrative bloat): it accelerates synergy realisation, strategically repositions the company as an intelligence-led market leader and reduces integration risk.

Artificial intelligence could also be deployed after similar takeovers and built into the technical development of the product or service, for example by engineering efficient materials, structures and designs for state-of-the-art projects, slashing development time and skill risks.

With AI agents being ‘hot’ right now, we cannot fail to mention OpenClaw, an open-source autonomous AI agent platform designed to perform tasks by taking control of the user’s computer. The project, the world’s fastest-growing GitHub project, was so successful that its creator, Austrian developer Peter Steinberger, joined OpenAI. As part of the OpenAI team, Steinberger will work directly on building agents designed for non-technical users, leveraging the lab’s frontier models and unreleased research. More broadly, recent reports on leaked source code of Anthropic’s closed-source Claude suggest that a “post-prompt era” will be the end goal of AI: the code revealed an autonomous daemon mode called KAIROS. This agent runs 24/7 in the background, learns from the person’s habits, tasks and needs, and starts to execute tasks on their behalf without being asked, as it already knows what they want. For instance, if someone’s website goes down due to an unexpected error while they are asleep, the site no longer has to stay down until they wake up: the daemon-mode agent detects the outage, boots the site back up and then notifies the person of what it has done. Both terrific and terrifying.
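The website example describes a simple detect, repair, notify loop. The sketch below illustrates that general pattern only; it is not KAIROS or any Anthropic code, and `watchdog_step` together with its `check`, `restart` and `notify` callables are hypothetical hooks that a real agent would have to supply (for example an HTTP health check and a service restart command).

```python
from typing import Callable


def watchdog_step(
    check: Callable[[], bool],
    restart: Callable[[], bool],
    notify: Callable[[str], None],
) -> str:
    """One pass of a hypothetical background agent: detect, repair, then report."""
    if check():
        return "healthy"  # nothing to do, stay silent
    # Only report success if the restart worked AND a fresh check confirms it.
    if restart() and check():
        notify("Site was down; restarted it and confirmed it is back up.")
        return "recovered"
    notify("Site is down and the automatic restart failed; human action needed.")
    return "escalated"


# Usage with stand-in hooks: the site starts down and comes back after a restart.
state = {"up": False}
messages: list[str] = []


def fake_restart() -> bool:
    state["up"] = True
    return True


result = watchdog_step(lambda: state["up"], fake_restart, messages.append)
print(result, messages)
```

A real daemon would call such a step on a schedule in the background. Re-checking after the restart before notifying is the design choice that separates a genuine recovery from a false all-clear.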

After all, for some people, such as Sam Altman, the end goal will always be to create AGI (artificial general intelligence), a theoretical type of artificial intelligence that matches or surpasses human capabilities across virtually all cognitive tasks. Combining AGI with an agent-style daemon mode could open up almost endless opportunities. Nevertheless, the threats to the global economy, and indeed to humanity, are just as numerous if precautions are not taken early enough.

Conclusion

At its current stage, artificial intelligence is neither a speculative illusion nor a fully realized economic revolution. It can be considered a structurally transformative technology deployed within a capital allocation framework that remains deeply inefficient.

The evidence throughout this analysis points to a clear divergence. At the firm level, artificial intelligence delivers measurable gains in productivity, cost efficiency, and operational scalability. However, at the macro and market level, these gains fail to translate proportionally into realized returns. Capital expenditures are expanding at a pace that far exceeds monetization, creating a widening gap between expected and actual value creation. Further, while early-stage data remains incomplete, directional trends are sufficiently clear to support our thesis that artificial intelligence is a significant technological innovation that currently holds unstable future economic value.

The aforementioned divergence lies at the core of the current AI mispricing. Markets have priced artificial intelligence as a linear function of exponential investment, assuming that scaling compute, data, and infrastructure will inevitably produce commensurate economic output. The DeepSeek repricing event and the subsequent valuation uncertainty phase challenged this assumption directly, demonstrating that efficiency, not scale, may become the dominant competitive variable. As a result, previously assumed barriers to entry weaken, pricing power compresses, and the long-term return profile of AI infrastructure becomes increasingly uncertain.

At the same time, dismissing artificial intelligence as a bubble would be analytically incorrect. The technology continues to expand its application frontier, particularly in areas such as operational optimization, decision-making, and intelligent automation. Over the long run, these capabilities are likely to reshape cost structures and competitive dynamics across industries. The issue is not the absence of value, but the timing and distribution of that value.

The current phase of the AI market is therefore best understood as a repricing process rather than a collapse. Capital is being re-evaluated, expectations are being recalibrated, and business models are being stress-tested against economic reality. In this environment, the key determinant of long-term winners will not be scale alone, but the ability to convert technological capability into sustainable and measurable cash flows.

For investors, the implication is clear: exposure to artificial intelligence should be approached with selectivity rather than thematic optimism. Firms with diversified revenue streams, clear monetization pathways, and disciplined capital allocation are structurally better positioned than those reliant on continuous external funding and speculative infrastructure expansion.

Artificial intelligence will likely define the next era of economic development. However, the path toward that outcome is neither smooth nor uniformly profitable. The current market is not misjudging the importance of AI; it is misjudging the efficiency with which that importance can be translated into economic return.

Bibliography

1. Institutional Reports and Core Data

McKinsey & Company (2023).

The Economic Potential of Generative AI.

PwC (2017).

Sizing the Prize: What’s the Real Value of AI for Your Business and How Can You Capitalise?

International Monetary Fund (2024).

AI and the Future of Work.

Goldman Sachs (2023).

AI and the Global Economy.

Stanford Human-Centered AI Institute (2025).

AI Index Report 2025.

https://hai.stanford.edu/assets/files/hai_ai_index_report_2025.pdf

Stanford Human-Centered AI Institute (2025).

AI Index Report 2025 – Policy Highlights.

https://hai-production.s3.amazonaws.com/files/hai-ai-index-2025-policy-highlights.pdf

Stanford Human-Centered AI Institute (2025).

AI Index Report (online portal).

2. AI Industry and Market Growth Data

UNCTAD (2024).

AI Market Projections.

International Data Corporation (2024).

Worldwide Artificial Intelligence Spending Guide.

Statista (2024).

Artificial Intelligence Market Data and Forecasts.

3. Academic and Policy Research

Institute for Policy Research at Northwestern University (2025).

The AI Revolution.

https://www.ipr.northwestern.edu/news/2025/the-ai-revolution.html

Brookings Institution (2025).

The Effects of AI on Firms and Workers.

https://www.brookings.edu/articles/the-effects-of-ai-on-firms-and-workers/

World Economic Forum (2024).

Future of Jobs Report.

OECD (2024).

AI and Employment Outlook.

U.S. Bureau of Labor Statistics (2024).

Productivity and Technology Data.

4. Adoption, Execution, and Reality Gap

MIT Sloan Management Review (2024).

Why AI Projects Fail.

Harvard Business Review (2024).

Artificial Intelligence and Execution Challenges in Firms.

Fortune (2025).

AI companies face execution challenges and workforce fatigue.

https://fortune.com/2025/06/11/ai-companies-employee-fatigue-failure/

5. Financial Press and Market Analysis

Financial Times (2025).

AI valuation and market structure concerns.

https://www.ft.com/content/648046c1-7fcd-43fb-819b-841f104396d9

Reuters (2025).

Business leaders agree AI is the future—but execution lags.

https://www.reuters.com/business/business-leaders-agree-ai-is-future-they-just-wish-it-worked-right-now-2025-12-16/

Reuters (2026).

Future AI will be built on infrastructure constraints.

https://www.reuters.com/technology/future-ai-will-be-written-nuts-bolts-2026-01-28/

Reuters Breakingviews (2026).

Energy shocks and risks to the AI boom.

https://www.reuters.com/commentary/breakingviews/how-energy-shock-could-derail-ai-boom-2026-03-19/

Reuters (2026).

AI growth versus debt constraints in major economies.

https://www.reuters.com/world/europe/ai-boom-will-be-no-free-pass-debt-laden-major-economies-2026-02-27/

Bloomberg (2025).

AI valuations and capital market dynamics.

6. Industry and Consulting Perspectives

KPMG (2025).

AI Frontiers Report.

https://plus.reuters.com/kpmg-ai-frontiers/p/1

7. Academic and Technical Literature

National Bureau of Economic Research (2024).

Artificial Intelligence and Productivity.

arXiv (2024).

AI Scaling Laws and Model Development Papers.

Journal of Economic Perspectives (2024).

Artificial Intelligence as a General-Purpose Technology.

8. Company-Level Case Studies

Amazon (2024).

AI in logistics and operations.

Google (2024).

AI in search and advertising systems.

Meta (2024).

AI-driven advertising optimisation.

9. Independent Technical Commentary

Peter Steinberger (2026).

OpenClaw: Reflections on AI limitations.

https://steipete.me/posts/2026/openclaw

10. Multimedia Sources

AI-related discussions and analysis:

https://www.youtube.com/watch?v=Vx8Gua9tZe4

https://www.youtube.com/watch?v=v5H3bonLIeA

https://www.youtube.com/watch?v=BZ1hs2ZcnJc

All data used in this article is derived from publicly available institutional reports and industry analyses.

This article is for informational purposes only and does not constitute investment advice.

Mihail Gaydarov

Founder & Chief Financial Analyst.

Spas Arsov

Financial Analyst, M&A Specialist.

The Financier Review

© 2026 The Financier Review. All rights reserved.